Serverless Cron Jobs with AWS Batch

An AWS Lambda function can run for at most 15 minutes. For cron jobs that need more than 15 minutes, there is AWS Batch.

Hi there 👋

I'm an Indonesian who loves coffee and dogs!! Also, I code sometimes.

Currently studying at Kyoto College of Graduate Studies for Informatics. 🏫

Working as a Full-Stack Engineer.

When we think about serverless jobs on AWS, the first thing that comes to mind is AWS Lambda, a fantastic compute service that lets you run code without provisioning or managing servers. But AWS Lambda has some limitations: a function can run for at most 15 minutes per invocation. For cron jobs that need more than 15 minutes, there is AWS Batch.

What is AWS Batch

AWS Batch is a set of batch management capabilities that enables developers, scientists, and engineers to quickly and efficiently run hundreds of thousands of batch computing jobs on AWS.

AWS Batch can run jobs on AWS Fargate, AWS's serverless container service, so that we only pay for the compute our jobs actually use.

Build the Batch Job

First of all, we need to build a working batch job.

Write the code

The easiest way to build a batch job, in my opinion, is to package it as a Docker image. As long as it runs in a Docker container, you can use any language with AWS Batch. In this example, we will use Python.

Dockerfile

To create a Docker image, we need a Dockerfile. We will create a simple Python container and install the packages listed in our requirements.txt.

./Dockerfile

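```dockerfile
FROM python:3.9

WORKDIR /app

# Install dependencies first so this layer stays cached when only the code changes
COPY requirements.txt .
RUN pip install --no-cache-dir -r requirements.txt

# Copy the application code
COPY . .

# Run the script when the container starts
CMD ["python", "run.py"]
```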

Sample Code

For this example, the job uses the installed requests package to fetch a web page and print its HTML, so we can confirm in the execution logs that the job actually ran.

requirements.txt

```text
requests==2.27.1
```

run.py

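```python
import requests

# Fetch the page; give up instead of hanging if the server does not respond
res = requests.get("https://blog.alvinend.tech/", timeout=30)

# Raise on HTTP errors so the container exits non-zero and AWS Batch marks the job FAILED
res.raise_for_status()

# Anything printed to stdout ends up in the job's execution logs
print(res.text)
```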

Put Image in ECR

The next step is pushing our image to Amazon ECR (Elastic Container Registry).

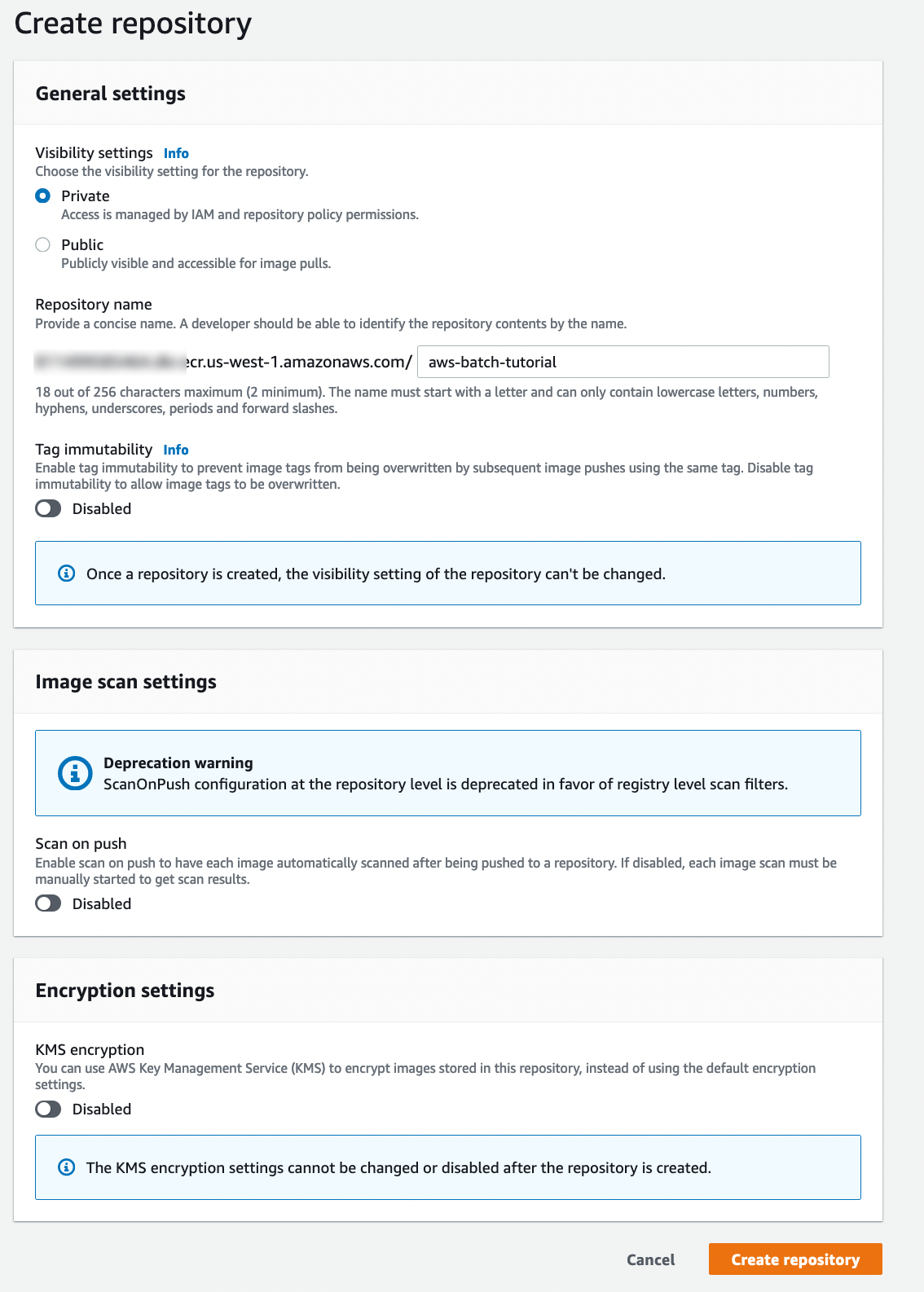

Create New Repository

- Go to Amazon ECR (don't forget to check your AWS region).

- From the sidebar, go to "Repositories" and click the "Create repository" button.

- Set "Visibility settings" to "Private" and enter the repository name.

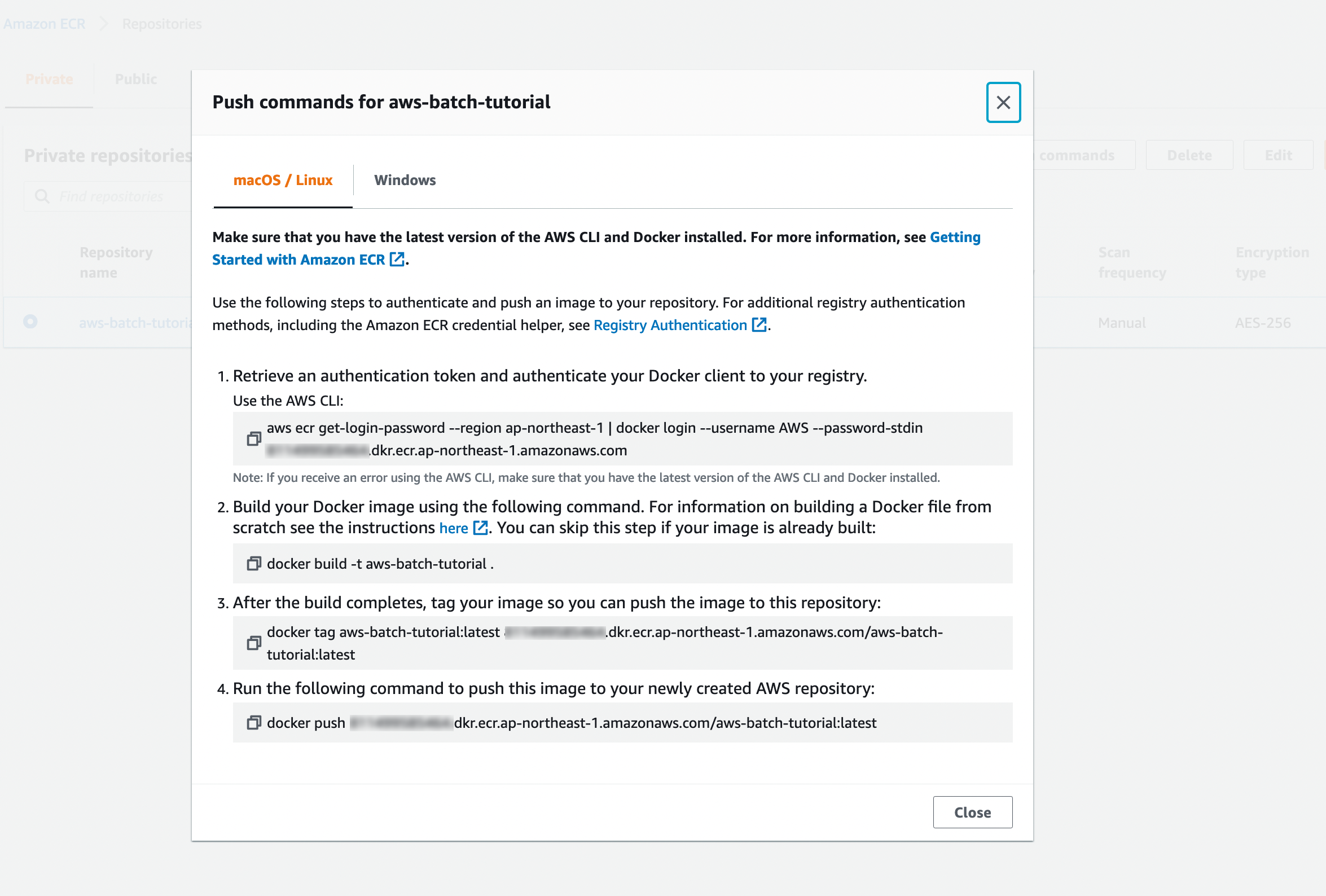

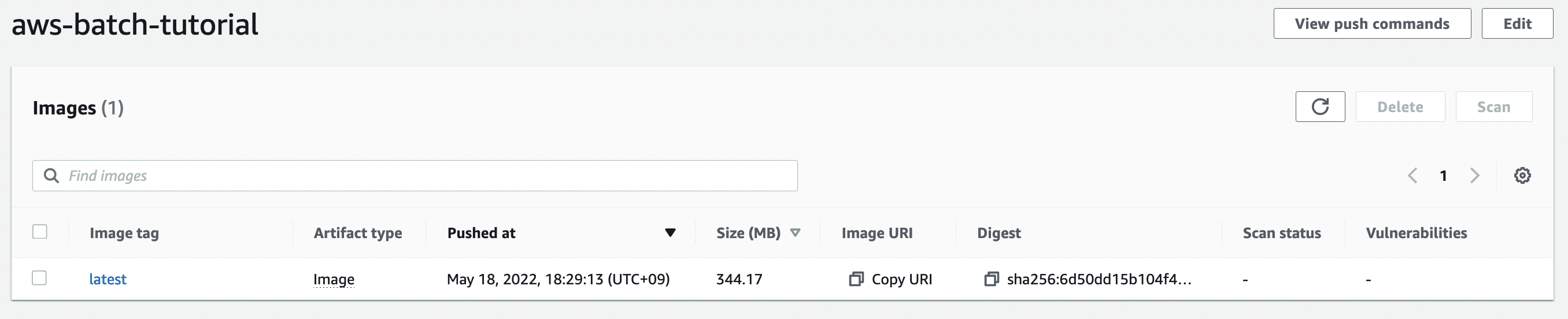
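If you prefer scripting this step, the form above roughly corresponds to one ECR API call. Below is a minimal sketch, assuming the AWS SDK for Python (boto3) is installed and your credentials are configured; the repository name you pass in is up to you. The request builder is kept separate from the API call so it can be checked without touching AWS.

```python
def build_create_repository_request(repository_name: str) -> dict:
    """Keyword arguments for boto3's ecr_client.create_repository().

    Repositories created through the regular "ecr" API (not "ecr-public")
    are private, matching the "Private" visibility chosen in the console.
    """
    return {
        "repositoryName": repository_name,
        # MUTABLE allows re-pushing a new image under the same ":latest" tag
        "imageTagMutability": "MUTABLE",
    }


def create_repository(ecr_client, repository_name: str) -> str:
    """Create the repository and return its URI.

    ecr_client is assumed to be boto3.client("ecr", region_name=YOUR_REGION).
    """
    response = ecr_client.create_repository(
        **build_create_repository_request(repository_name)
    )
    # e.g. ACCOUNT.dkr.ecr.REGION.amazonaws.com/REPOSITORY_NAME
    return response["repository"]["repositoryUri"]
```

For example, `create_repository(boto3.client("ecr", region_name="ap-northeast-1"), "serverless-cron")`, where the region and repository name are placeholders.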

Push Image

Select the created repository and click the "View push commands" button.

Run those four commands, then check in the AWS console that the image appears in the repository.

P.S. If you get an error while pushing the image, there is a good chance it is an authentication problem. The AWS CLI configuration guide below might help.

https://docs.aws.amazon.com/cli/latest/userguide/cli-configure-files.html
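The four push commands follow a fixed pattern built from your account ID, region, and repository name. The sketch below only assembles the command strings, so it is safe to run; the account ID, region, and repository name in it are placeholders, and the "View push commands" dialog remains the source of truth for your own repository.

```python
def ecr_registry(account_id: str, region: str) -> str:
    """Host name of a private ECR registry."""
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com"


def push_commands(account_id: str, region: str, repository: str, tag: str = "latest") -> list[str]:
    """Return the four shell commands that build and push the image to ECR."""
    registry = ecr_registry(account_id, region)
    image = f"{registry}/{repository}:{tag}"
    return [
        # 1. Log Docker in to the private registry with a temporary ECR password
        f"aws ecr get-login-password --region {region} | "
        f"docker login --username AWS --password-stdin {registry}",
        # 2. Build the image from the Dockerfile in the current directory
        f"docker build -t {repository} .",
        # 3. Tag the local image with its full ECR name
        f"docker tag {repository}:{tag} {image}",
        # 4. Push the image to the repository
        f"docker push {image}",
    ]


if __name__ == "__main__":
    # Placeholder values; replace them with your own account, region, and repository
    for command in push_commands("123456789012", "ap-northeast-1", "serverless-cron"):
        print(command)
```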

Run the Job in AWS Batch

There are four steps before we can run our job.

Create Compute Environment

As the name suggests, a compute environment is where our jobs are executed. There are four provisioning models to choose from:

- EC2

- EC2 Spot

- Fargate

- Fargate Spot

If you choose EC2, AWS launches EC2 instances and runs your jobs on them. Fargate is AWS's serverless container service, so there are no servers to manage. Spot uses spare AWS capacity at a lower price, in exchange for the risk that the capacity is reclaimed at short notice and your job is interrupted. We will be using Fargate Spot.

- Go to the AWS Batch page

- Click "Compute environments" in the sidebar

- Click the "Create" button

- Fill out the creation form

- For "Compute environment configuration":

| Key | Value | Explanation |
|---|---|---|
| Compute environment type | Managed | Let's make AWS do complicated stuff for us 😀 |
| Compute environment name | (anything) | Up to you 👍 |
| Enable compute environment | True | We want to use it! 👊 |

- For "Instance configuration":

| Key | Value | Explanation |
|---|---|---|
| Provisioning model | Fargate Spot | Fargate for serverless! Spot for a low price! 💰 |
| Maximum vCPUs | 1 | We only run a simple script, so we don't need much compute power |

- For "Networking," just leave the defaults! The default VPC has public subnets that can reach the internet.

- Click "Create."

Just like that, we created our computing environment.
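For reference, here is the same compute environment expressed as the keyword arguments of boto3's `create_compute_environment`. This is a sketch assuming boto3; the environment name, subnet IDs, and security group IDs are placeholders you would supply. One difference from the console: the API does not pick the default VPC for us, so a Fargate environment needs explicit subnets and at least one security group (for example, those of the default VPC).

```python
# Console "Provisioning model" -> value of computeResources["type"] in the API
PROVISIONING_MODELS = {
    "EC2": "EC2",
    "EC2 Spot": "SPOT",
    "Fargate": "FARGATE",
    "Fargate Spot": "FARGATE_SPOT",
}


def build_compute_environment_request(
    name: str,
    subnets: list[str],
    security_group_ids: list[str],
    provisioning_model: str = "Fargate Spot",
    max_vcpus: int = 1,
) -> dict:
    """Keyword arguments for boto3's batch_client.create_compute_environment()."""
    return {
        "computeEnvironmentName": name,
        "type": "MANAGED",   # "Compute environment type: Managed"
        "state": "ENABLED",  # "Enable compute environment: True"
        "computeResources": {
            "type": PROVISIONING_MODELS[provisioning_model],
            "maxvCpus": max_vcpus,
            # Required by the API; the console fills these in from the default VPC
            "subnets": subnets,
            "securityGroupIds": security_group_ids,
        },
    }
```

Usage would look like `boto3.client("batch").create_compute_environment(**build_compute_environment_request("cron-fargate-spot", ["subnet-0123456789abcdef0"], ["sg-0123456789abcdef0"]))`, with all IDs and names being placeholders.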

Create Job Queue

Jobs are submitted to a job queue where they reside until they can be scheduled to run in a Compute Environment.

- Go to the AWS Batch page

- Click "Job queues" in the sidebar

- Click the "Create" button

- Fill out the creation form

- For "Job queue configuration":

| Key | Value | Explanation |
|---|---|---|
| Job queue name | (anything) | Again, up to you 👍 |
| Priority | 1 | Priority of this queue relative to other queues. Since we only create one, it doesn't matter. |

- For "Connected compute environments," select the compute environment we created before.

- Click "Create."
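The job queue form maps to boto3's `create_job_queue`. A minimal sketch under the same assumptions (boto3 configured; the queue and compute environment names are placeholders):

```python
def build_job_queue_request(name: str, compute_environment: str, priority: int = 1) -> dict:
    """Keyword arguments for boto3's batch_client.create_job_queue()."""
    return {
        "jobQueueName": name,
        "state": "ENABLED",
        # Queues with a higher priority value are evaluated first when they
        # share a compute environment; with a single queue it does not matter
        "priority": priority,
        # "Connected compute environments", tried in ascending "order"
        "computeEnvironmentOrder": [
            {"order": 1, "computeEnvironment": compute_environment},
        ],
    }
```

It would be sent with `boto3.client("batch").create_job_queue(**build_job_queue_request("cron-queue", "cron-fargate-spot"))`, where `compute_environment` is the name or ARN of the environment created earlier.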

Create Job Definition

Job Definition is a blueprint for creating jobs.

- Go to the AWS Batch page

- Click "Job definitions" in the sidebar

- Click the "Create" button

- Fill out the creation form

- For "Job type," choose "Single-node" since we don't need parallel execution.

- For "General configuration," enter any name you want and a timeout of 300 seconds (5 minutes).

- For "Platform compatibility," choose Fargate with the latest platform version, check "Assign public IP" (in a public subnet, the job needs it to pull the image from ECR and reach the internet), and set the execution role to the default.

- For "Job configuration":

| Key | Value | Explanation |
|---|---|---|
| Image | YOUR_ACCOUNT_ID.dkr.ecr.YOUR_REGION.amazonaws.com/REPOSITORY_NAME:latest | Point it to your ECR repository |
| Command syntax | Bash | Because we love Bash 💖 |
| Command | python run.py | Runs our script |
| vCPUs | 1.0 | We don't need much |
| Memory | 2GB | Leave it at the default |

Leave the rest at their defaults.

- Click the "Create" button
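The job definition form corresponds to boto3's `register_job_definition`. In this sketch (assuming boto3; the definition name, image URI, and execution role ARN are placeholders you supply), the values mirror the form: a single-node container job on Fargate with a 300-second timeout, 1 vCPU, 2048 MiB of memory, and a public IP. The explicit `command` is an assumption on my part so the job runs our script regardless of the image's default command.

```python
def build_job_definition_request(
    name: str,
    image: str,
    execution_role_arn: str,
    timeout_seconds: int = 300,
    vcpus: str = "1",
    memory_mib: str = "2048",
) -> dict:
    """Keyword arguments for boto3's batch_client.register_job_definition()."""
    return {
        "jobDefinitionName": name,
        "type": "container",                # single-node job
        "platformCapabilities": ["FARGATE"],
        "timeout": {"attemptDurationSeconds": timeout_seconds},
        "containerProperties": {
            "image": image,
            # Assumed command; overrides the image's default CMD
            "command": ["python", "run.py"],
            # Role used to pull the image from ECR and write logs (placeholder ARN)
            "executionRoleArn": execution_role_arn,
            # Fargate takes vCPU and memory (in MiB) as strings
            "resourceRequirements": [
                {"type": "VCPU", "value": vcpus},
                {"type": "MEMORY", "value": memory_mib},
            ],
            "networkConfiguration": {"assignPublicIp": "ENABLED"},
            "fargatePlatformConfiguration": {"platformVersion": "LATEST"},
        },
    }
```

Registering it would be `boto3.client("batch").register_job_definition(**build_job_definition_request(...))` with your own name, image URI, and role ARN filled in.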

Create Job

Preparation is done, so let's test-run it.

- Stay on the "Job definitions" page

- Select the job definition we created

- Click the "Submit new job" button

- Enter a job name (again, whatever you want) and choose the job queue we created

- Click "Submit"

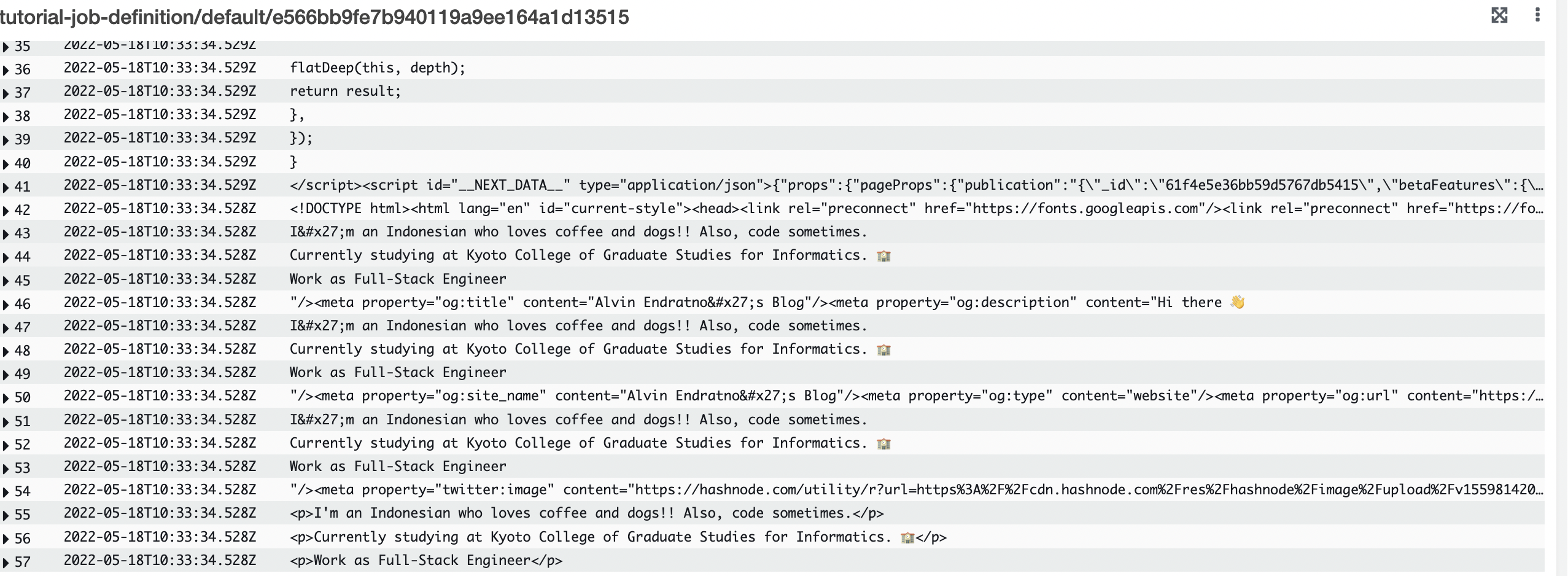

Congratulations! You have created your first AWS Batch job 🎉. Let's sit back and wait for the execution logs (hint: they are not real-time, so expect some delay).
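The test run can also be scripted: submit with boto3's `submit_job`, then poll `describe_jobs` until the job finishes. A minimal sketch, assuming boto3; the job, queue, and definition names are placeholders, and the 15-second polling interval is an arbitrary choice.

```python
import time

# Terminal job states in AWS Batch
TERMINAL_STATES = {"SUCCEEDED", "FAILED"}


def build_submit_job_request(job_name: str, job_queue: str, job_definition: str) -> dict:
    """Keyword arguments for boto3's batch_client.submit_job()."""
    return {
        "jobName": job_name,              # letters, numbers, hyphens, underscores
        "jobQueue": job_queue,            # name or ARN of the job queue
        "jobDefinition": job_definition,  # name, name:revision, or ARN
    }


def wait_for_job(batch_client, job_id: str, poll_seconds: float = 15.0) -> dict:
    """Poll describe_jobs() until the job SUCCEEDED or FAILED, then return its description."""
    while True:
        job = batch_client.describe_jobs(jobs=[job_id])["jobs"][0]
        # Typical progression: SUBMITTED, PENDING, RUNNABLE, STARTING, RUNNING, then terminal
        if job["status"] in TERMINAL_STATES:
            return job
        time.sleep(poll_seconds)
```

For example, with `batch = boto3.client("batch")`, run `job_id = batch.submit_job(**build_submit_job_request("first-run", "cron-queue", "cron-job-def"))["jobId"]` and then `job = wait_for_job(batch, job_id)`.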

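Logs: the output of run.py (the page's HTML) shows up in Amazon CloudWatch Logs, in the /aws/batch/job log group by default. The job's details page in the AWS Batch console links to its log stream.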
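The log lines can also be fetched from a script once the job has finished. A minimal sketch, assuming boto3's CloudWatch Logs client (`boto3.client("logs")`), the default `/aws/batch/job` log group, and a job description dict as returned by boto3's `describe_jobs`. It reads only the first page of events, which is enough for a short script like ours.

```python
# Default CloudWatch log group for AWS Batch jobs
DEFAULT_LOG_GROUP = "/aws/batch/job"


def log_stream_of(job: dict) -> str:
    """Log stream name from a describe_jobs() job description."""
    return job["container"]["logStreamName"]


def print_job_logs(logs_client, job: dict, log_group: str = DEFAULT_LOG_GROUP) -> list[str]:
    """Fetch the first page of the job's log events, print them, and return them."""
    response = logs_client.get_log_events(
        logGroupName=log_group,
        logStreamName=log_stream_of(job),
        startFromHead=True,  # oldest events first
    )
    lines = [event["message"] for event in response["events"]]
    for line in lines:
        print(line)
    return lines
```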

Scheduled Jobs

The last thing we need to do is set a schedule because, without it, this will be a "job," not a "cron job." 😆

Set a Rule in Amazon EventBridge

- Go to Amazon EventBridge

- Click the "Create rule" button

- For "Define rule detail," enter any "Name" and "Description" you like. Leave "Event bus" at the default and set "Rule type" to "Schedule."

- For "Define schedule," choose when you want the job to run. See AWS Schedule Expressions.

- For "Select target(s)," set the target type to "AWS service" and "Select a target" to "Batch job queue." Enter the ARNs of your job queue and job definition, and any name you like as the job name.

- Skip "Configure tags."

- Review and create

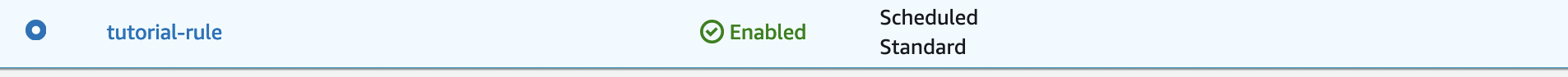

If done correctly, it will appear in the "Rules" list.
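The rule can also be created with boto3's EventBridge client (`put_rule`, then `put_targets`). The sketch below assumes boto3; the rule name, ARNs, and job name are placeholders. It also assumes an IAM role that EventBridge can assume to submit Batch jobs (the console can typically create one for you). EventBridge cron expressions have six fields and are evaluated in UTC; `rate(1 day)` is a simpler alternative.

```python
def daily_cron(hour_utc: int, minute: int = 0) -> str:
    """EventBridge cron expression for once a day at HH:MM UTC.

    Fields: minutes hours day-of-month month day-of-week year.
    One of day-of-month / day-of-week must be "?".
    """
    if not (0 <= hour_utc <= 23 and 0 <= minute <= 59):
        raise ValueError("hour must be 0-23 and minute 0-59")
    return f"cron({minute} {hour_utc} * * ? *)"


def build_rule_requests(
    rule_name: str,
    schedule: str,
    job_queue_arn: str,
    job_definition_arn: str,
    job_name: str,
    role_arn: str,
) -> tuple[dict, dict]:
    """Return (put_rule kwargs, put_targets kwargs) for boto3's events client."""
    put_rule = {"Name": rule_name, "ScheduleExpression": schedule, "State": "ENABLED"}
    put_targets = {
        "Rule": rule_name,
        "Targets": [
            {
                "Id": "batch-job-queue",  # any ID unique within this rule
                "Arn": job_queue_arn,     # the target is the Batch job queue
                "RoleArn": role_arn,      # role EventBridge assumes to submit the job
                "BatchParameters": {
                    "JobDefinition": job_definition_arn,
                    "JobName": job_name,
                },
            }
        ],
    }
    return put_rule, put_targets
```

With `events = boto3.client("events")`, the two dicts would be sent as `events.put_rule(**rule)` followed by `events.put_targets(**targets)`.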

Ending

This time, we created a serverless cron job on AWS. Compared to AWS Lambda, AWS Batch needs more work to set up, but each has its own use cases, and in my opinion, no single tool fits every scenario. Knowing many tools will help us along the way. Besides, I am happy there are so many tools we can use 😃.